The Architecture Of Multi-Agent Orchestration

How Software 3.0 Is Moving From Single LLMs To AI Ensembles

For decades, developers wrote explicit instructions telling computers exactly what to do. This was Software 1.0. Then came Software 2.0, where neural networks learned patterns from massive datasets, allowing us to perform tasks like image recognition without writing explicit rules.

Today, we are standing at the edge of Software 3.0. In this new paradigm, we no longer just prompt a single Large Language Model (LLM) and hope for the best. Instead, we design systems of autonomous AI agents that can reason, plan, write their own code, use external tools, and collaborate to achieve complex, high-level goals. Imagine a transition from a system that can merely identify a syntax bug in your code (Software 2.0) to a system that identifies the bug, writes a patch, runs unit tests to verify the fix, and automatically submits a pull request (Software 3.0). This shift moves AI from passive observation to active participation.

The Anatomy Of An Autonomous AI Agent

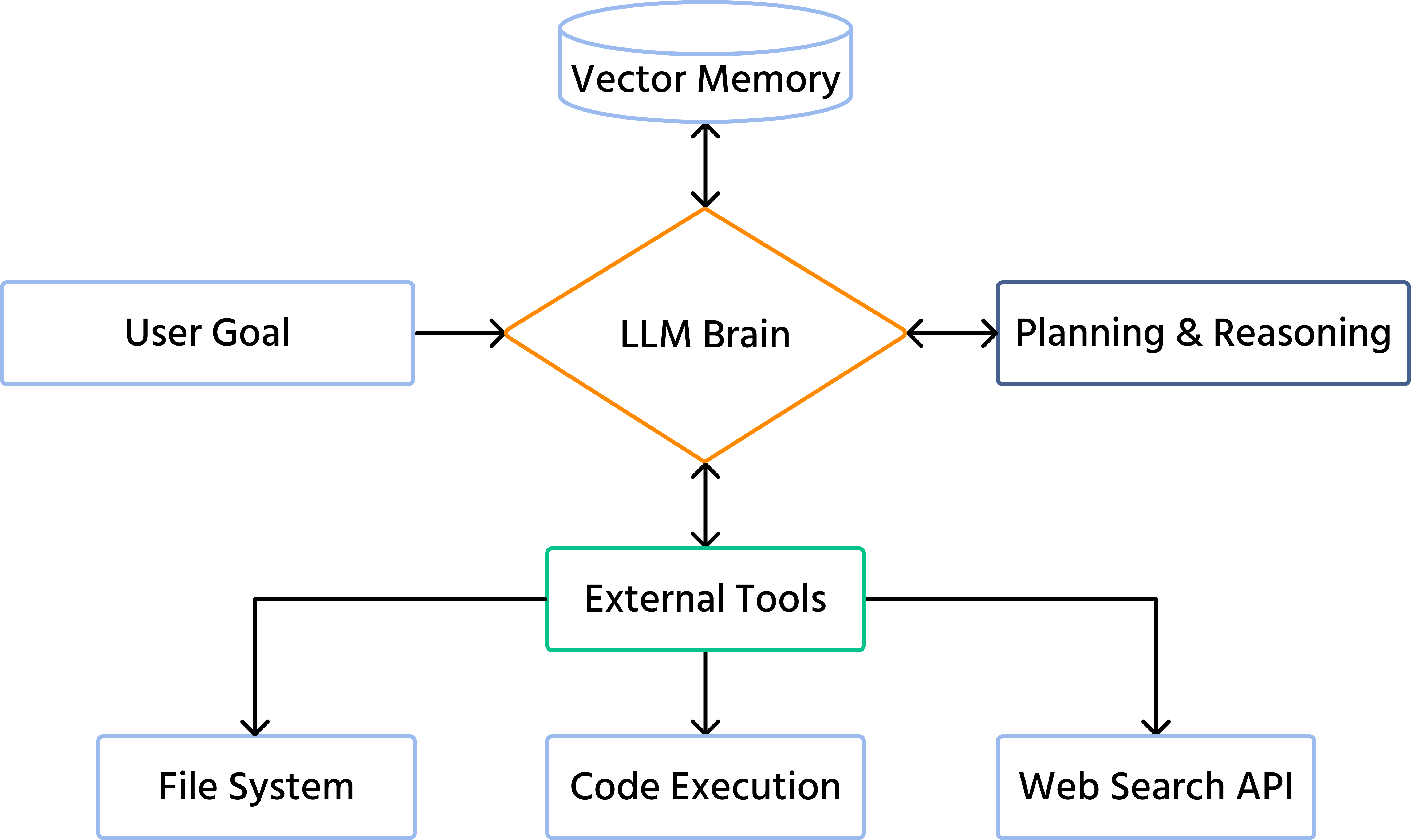

To understand a multi-agent system, we must first look at a single agent. An LLM (like GPT-4 or Claude 3) is just a text-prediction engine. It is the "brain", but a brain in a jar cannot interact with the world. An AI Agent is an LLM wrapped in a systemic architecture that gives it hands, memory, and agency.

A standard autonomous agent consists of four core pillars:

- The LLM core: the reasoning engine that processes natural language and makes decisions. It still works by predicting the next token, but inside an agent it is prompted to evaluate the current state of a problem and determine the logical next step;

- Memory: both short-term memory (the current conversation context) and long-term memory (typically a vector database storing past interactions and knowledge). Short-term memory acts as the agent's "working RAM," while long-term memory uses techniques like Retrieval-Augmented Generation (RAG) to pull relevant historical data back into the prompt, which helps the agent avoid repeating past mistakes during long tasks;

- Planning: the ability to break a massive goal into smaller, actionable steps using frameworks like ReAct (Reason + Act). The agent explicitly writes down its "Thoughts," takes an "Action," and analyzes the "Observation" from that action before moving forward;

- Tools: external functions the agent can call, such as a Python interpreter, a web search API, or a SQL database connector. If an agent needs to do exact arithmetic, it should not rely on its internal neural weights, which are unreliable at precise calculation; instead, it writes a short Python script, executes it, and reads the exact output.


Multi-Agent Systems: The Ensemble Approach

While a single agent is powerful, it is prone to hallucination, constrained by its context window, and liable to lose focus on long-running tasks. In traditional machine learning, we solve complex problems using ensembles (like Random Forests combining multiple decision trees). In Software 3.0, we use Multi-Agent Orchestration.

Instead of asking one agent to build an entire web application, we divide the labor among specialized agents. When a single LLM tries to write HTML, manage database schemas, and configure server deployments simultaneously, its context window becomes cluttered with unrelated details, which makes hallucinations and broken code more likely. Dividing the labor keeps each agent's context focused and can substantially improve accuracy.

Common multi-agent architectural patterns include:

- The router: a lightweight agent that analyzes the user's prompt and routes it to the most capable specialist agent. It acts as a highly intelligent traffic controller, ensuring that a query about frontend styling isn't accidentally sent to the database optimization agent;

- The worker: a specialist agent with a very narrow system prompt and specific tools (e.g., a "Database Agent" that only has access to SQL tools). Because its instructions are tightly constrained, a Worker tends to be more precise and is much less likely to drift off-topic;

- The reviewer: a "critic" agent that takes the output of a Worker and evaluates it against quality standards, sending it back for revision if it detects an error. This creates an internal feedback loop that mirrors the peer-review and QA processes found in professional human engineering teams.

Frameworks Driving The Change

Building these orchestration layers from scratch is complex: it involves state management and infinite-loop prevention. Developers must handle asynchronous communication between agents, manage shared memory contexts, and ensure errors from external tools are caught gracefully and interpreted. Fortunately, a new ecosystem of Software 3.0 frameworks has emerged to handle the heavy lifting.
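For a sense of what that plumbing involves, here is a hand-rolled sketch using only Python's standard `asyncio` library. Two hypothetical agents, `researcher` and `writer`, exchange messages through `asyncio.Queue` objects, the researcher writes to a shared dictionary that stands in for a shared memory context, and a `safe_call` wrapper turns tool exceptions into text the next step can react to instead of crashing the run. `flaky_tool` is an invented tool that fails on empty input.

```python
import asyncio
from typing import Callable

def flaky_tool(query: str) -> str:
    """Hypothetical external tool that fails on some inputs."""
    if not query:
        raise ValueError("empty query")
    return f"results for {query!r}"

def safe_call(tool: Callable[[str], str], arg: str) -> str:
    """Convert tool failures into an observation instead of an exception."""
    try:
        return tool(arg)
    except Exception as exc:
        return f"TOOL ERROR: {type(exc).__name__}: {exc}"

async def researcher(inbox: asyncio.Queue, outbox: asyncio.Queue, shared: dict) -> None:
    # None is used as a shutdown signal between agents.
    while (query := await inbox.get()) is not None:
        observation = safe_call(flaky_tool, query)
        shared.setdefault("log", []).append(observation)  # shared memory context
        await outbox.put(observation)
    await outbox.put(None)  # pass the shutdown signal downstream

async def writer(inbox: asyncio.Queue) -> list[str]:
    notes = []
    while (message := await inbox.get()) is not None:
        notes.append(f"noted: {message}")
    return notes

async def main() -> tuple[list[str], dict]:
    to_researcher: asyncio.Queue = asyncio.Queue()
    to_writer: asyncio.Queue = asyncio.Queue()
    shared: dict = {}
    for query in ["agent frameworks", "", None]:
        to_researcher.put_nowait(query)
    _, notes = await asyncio.gather(
        researcher(to_researcher, to_writer, shared),
        writer(to_writer),
    )
    return notes, shared

notes, shared = asyncio.run(main())
print("\n".join(notes))
# → noted: results for 'agent frameworks'
# → noted: TOOL ERROR: ValueError: empty query
```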

| Framework | Architecture Concept | Best Used For |
|---|---|---|
| LangGraph | Models agent workflows as cyclic graphs (state machines). | Highly complex, custom applications requiring granular control over state and loops. |
| Microsoft AutoGen | Conversational multi-agent framework. | Code generation and systems where agents "chat" to debate and solve problems. |
| CrewAI | Role-based design (Agents, Tasks, Crews). | Quickly assembling teams of agents for research, content creation, or data analysis. |
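LangGraph's central idea, a workflow modeled as a graph whose edges may loop back, can be illustrated without the library. The `MiniGraph` class below is a toy written for illustration and is not LangGraph's API; the `write_code` and `run_tests` nodes are stubs, and the first draft deliberately contains an off-by-one bug so the cycle runs twice before the tests pass. The `max_steps` cap shows the kind of loop protection such an engine needs.

```python
from typing import Any, Callable

State = dict[str, Any]
END = "END"

class MiniGraph:
    """Toy workflow engine: nodes return an updated state, edges pick the next node."""

    def __init__(self) -> None:
        self.steps: dict[str, Callable[[State], State]] = {}
        self.routes: dict[str, Callable[[State], str]] = {}

    def add_step(self, name: str, fn: Callable[[State], State]) -> None:
        self.steps[name] = fn

    def connect(self, src: str, dst: str) -> None:
        self.routes[src] = lambda state: dst          # fixed edge

    def branch(self, src: str, choose: Callable[[State], str]) -> None:
        self.routes[src] = choose                     # conditional edge (may loop back)

    def run(self, start: str, state: State, max_steps: int = 10) -> State:
        node = start
        for _ in range(max_steps):
            state = self.steps[node](state)
            node = self.routes[node](state)
            if node == END:
                return state
        raise RuntimeError(f"no END after {max_steps} steps; possible infinite loop")

def write_code(state: State) -> State:
    attempts = state["attempts"] + 1
    # Stub "coder agent": first draft has an off-by-one bug, second draft is fixed.
    code = ("def total(xs): return sum(xs[1:])" if attempts == 1
            else "def total(xs): return sum(xs)")
    return {**state, "attempts": attempts, "code": code}

def run_tests(state: State) -> State:
    namespace: dict[str, Any] = {}
    exec(state["code"], namespace)  # acceptable only because the code is our own stub
    return {**state, "tests_pass": namespace["total"]([1, 2, 3]) == 6}

graph = MiniGraph()
graph.add_step("write_code", write_code)
graph.add_step("run_tests", run_tests)
graph.connect("write_code", "run_tests")
graph.branch("run_tests", lambda s: END if s["tests_pass"] else "write_code")

final = graph.run("write_code", {"attempts": 0})
print(final["attempts"], final["tests_pass"])  # → 2 True
```

Because every node returns a new state dictionary rather than mutating shared globals, the full state after each step is easy to log, inspect, or checkpoint.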


Conclusion

Software 3.0 represents a fundamental shift in computer science. The role of the software engineer is evolving from writing explicit for loops and if statements to designing robust environments where autonomous agents can operate safely. Future developers are likely to spend less time debugging syntax errors and more time architecting "organizations" of agents. By mastering multi-agent orchestration, developers can build systems that don't just process data, but actively solve open-ended problems, plan architectures, and correct their own mistakes in real time.

FAQs

Q: Will Software 3.0 replace traditional software engineers?

A: No, but it changes the abstraction level. Just as compilers abstracted away assembly language, Software 3.0 abstracts away boilerplate code. Engineers will transition into "System Orchestrators," focusing on defining agent roles, writing precise system prompts, managing state, and ensuring security guardrails. Human oversight will remain critical to define business logic, handle ethical edge cases, and ensure the agents are optimizing for the right real-world metrics.

Q: How do you prevent agents from getting stuck in infinite loops?

A: Infinite loops happen when an agent keeps failing a task and retrying the same flawed approach without learning from the failure. Frameworks typically address this by enforcing a strict max_iterations limit, using token budgets, or implementing a "Human-in-the-loop" (HITL) pattern where the agent pauses and asks a human for approval before proceeding. Some systems also employ "Circuit Breakers," which automatically stop a specific agent's execution if it repeats the same tool call several times without progress.

Q: What is the difference between an LLM and an AI Agent?

A: An LLM is simply a mathematical model that predicts the next token (roughly, the next word or word fragment) based on input text. An AI Agent is a complete software system that uses an LLM as its reasoning core, but adds memory, planning capabilities, and access to external tools (like code execution or APIs) to take actions in an environment.
